The EM Algorithm for Nick Carter

Famous investigator Nick Carter got into troubles while solving a case of

mysterious disappearance of lady Thun's dog. The only clue he was able to obtain

was a set of photographs of the likely villain. Unfortunatelly, the photographs

are of so low quality, that they are almost unreadable.

How is Nick now missing his 33RPZ seminars, which he was light-headedly

skipping during his studies. Well, it's now too late to feel sorry about it. On

his own, he is unable to exploit the photographs he have and successfully solve

the case. Fortunatelly, his old friend, inspector Kidney, got a bright idea, and

told him about you, our talented students.

So there we have Nick, in a dire need of your help. Can you help him to

reveal the true identity of the villain?

Figure 1. Nick Carter pondering the EM algorithm

The clues

So the unfortunate Nick has m corrupted images of Himg

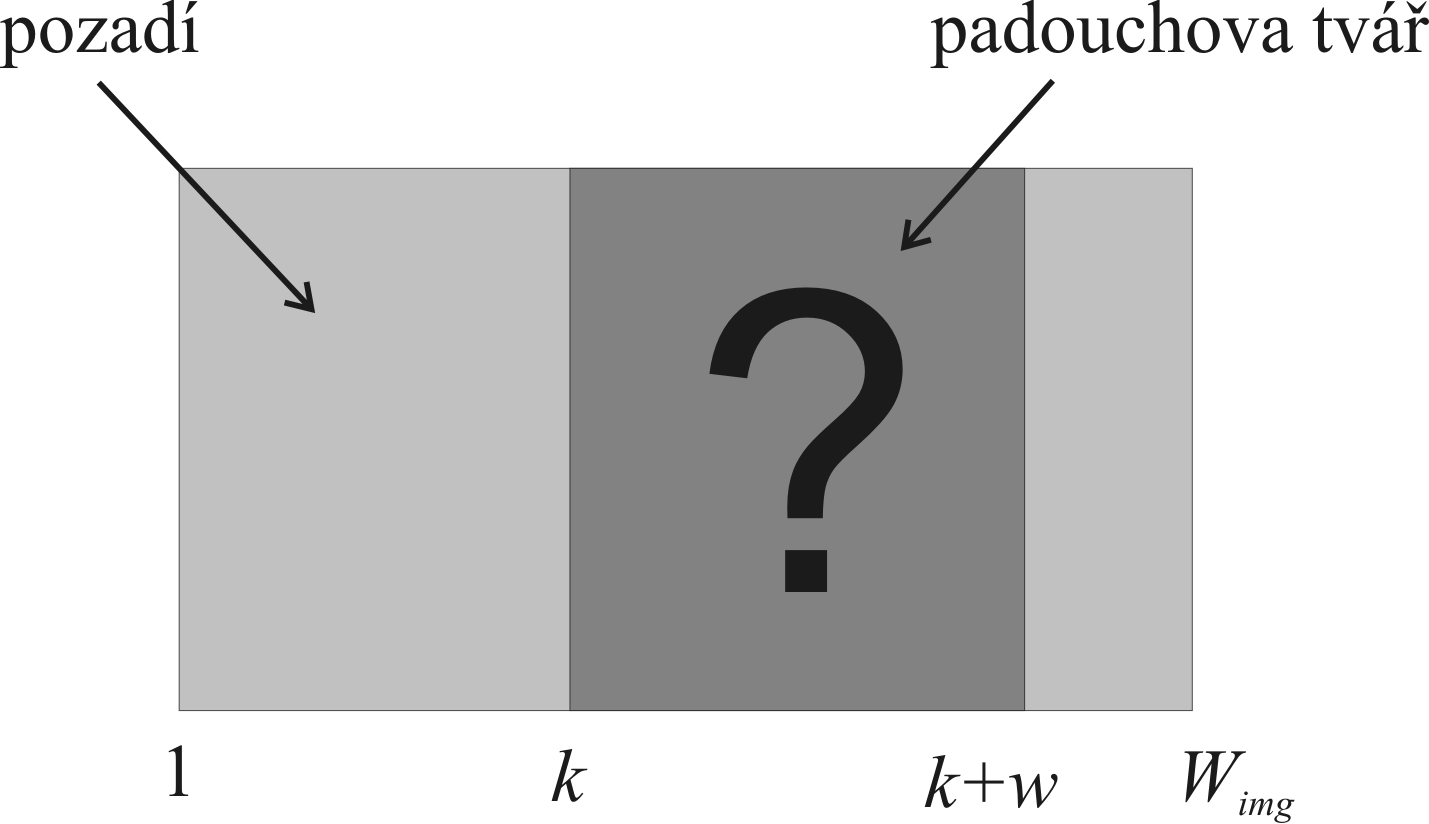

x Wimg pixels (Figure 2). He knows, that each image depicts

the face of the villain. He suspects, that the faces do not occupy whole images

- the image size of a face is only Himg x w

pixels. I.e. a face spans whole height of the image, but is narrower.

Thus, only the horizontal position of the face varies in the images (i.e. we can

assume that horizontal position k is the only unknown). We also know that

backround of the images (i.e. the image area outside the face itself) is

constant - has constant intensity b. See figure 3. The images are very

noisy (assume gaussian noise distribution here).

Figure 2. One of the Nick's images

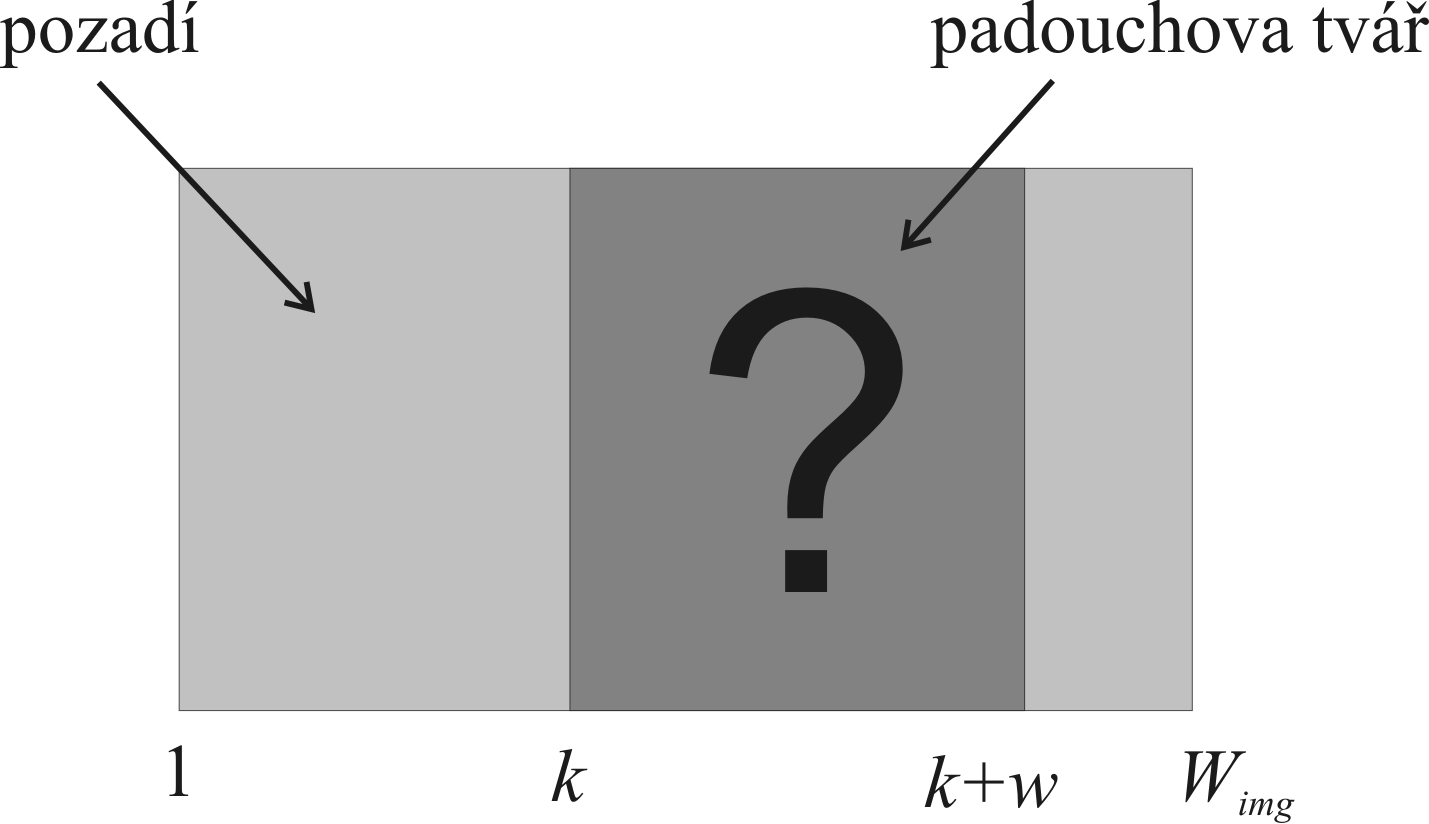

Figure 3. Image composition. The face is at an unknown horizontal position

k.

The stochastic model

We have a set of m images {X1,

X2, ... , Xm}, of Himg ×

Wimg pixels. Xis

are the images, each has n = Himg

* Wimg pixels. In each image there is the villain's face F

at position k. The face dimension is Himg

x w pixels, pixels outside the face have constant intensity b.

Each pixel Xi(r,c), i.e.

pixel in i-th image at position (r, c), is observed with a

gaussian noise N (0,σ). Therefore,

the probability of observing value Xi(r,c),

assuming face at position ki,

is:

- for background pixels (for c:

1 <= c < ki OR ki+w

<= c <=Wimg ):

P(xi(r,c)

| ki) = N(b, σ)

- for face pixels (i.e. for c:

ki

<= c < ki+w ):

P(xi(r,c)

| ki) = N(F(r,c-ki+1), σ)

.

The probability of observing image Xi,

assuming face at position ki,

can be written as a product over all pixels:

P( Xi | ki ) = Πr

Πc P( xi(r,c) | ki) .

The probability of observing the set of all m images P( {X1,...,Xm} )

is

P( {X1,...,Xm} ) = Πi

P( Xi) = Πi Σk P( Xi , k) =

Πi Σk P(k) P( Xi | k) .

Taking the logarithm, we obtain the likelihood of the image set: L = log P( {X1,...,Xm} ) .

As Nick Carter correctly guessed, the most likely estimate of the villain's face

F can be obtained using the EM algorithm. Hidden parameters are here the

face positions ki ,

i=1,..,m in the images.

EM Formulation

The EM algorithm will be used for finding the Maximal Likelihood (ML)-estimate

of parameters F, b, σ

(F, b, σ) = argmax P({X1,...,Xm}|F,

b, σ).

The hidden parameters are the face positions ki.

The EM algorithm iteratively executes in two steps, E-step and M-step:

- The E-step:

In the E-step, coefficients α(k,i) are

computed for current estimates of F, b, σ:

α(k,i)

= P(k) * P(Xi | k) / Σk

[ P(k) * P(Xi

| k) ] ,

where k =

1,...,Wimg-w+1,

i =

1,...,m.

The coefficients α(k,i)

represent estimates of probability P(ki|Xi).

In other words, here we estimate the probability, that i-th image

contains the face F at position ki.

The probability is evaluated for all images and for all possible positions.

- The M-step:

In the M-step, parameters P(k), F,

b, and σ are estimated (by

maximising the lower bound of the likelihood) for current estimates of α(k,i).

The probability P(k)

is computed as the average of α(k,i)

over all images i.

P(k)

= Σi α(k,i) / m ,

where k = 1,

..., Wimg

- w + 1, and the sum goes over i

= 1, ..., m. The remaining parameters F, b, σ

are estimated by maximising

(F,

b, σ) = argmax Σi Σk

α(k,i) * log P(Xi

| k, F, b, σ) ,

(1)

k = 1,

..., Wimg -

w + 1, and the sum again goes over i = 1, ..., m.

The equations for F, b, σ are

obtained by setting respective partial derivations of eq. (1) to zero. We

get

F

= [

Σi Σk α(k,i) * Xi

(:, k:k+w-1)

] / m

b

= [

Σi Σk α(k,i) * S(k,i)

] / [ m

* (n-Himg*w)

] ,

σ2 = [ Σi Σk

α(k,i) * ( A(k,i) +

B(k,i) ) ] / (m*n)

,

where

S( k,i) = Σr

Σc

Xi( r,c) , r=1,..., Himg , c=[1:k-1,

k+w:Wimg]

A(k,i) = Σr

Σc [

Xi

( r,k+c-1)

- F(r,c) ]2

, r=1,...,Himg

, c=1,...,w

B( k,i) = Σr

Σc [

Xi( r,c) - b ]2

, r=1,...,

Himg

, c=[1:k-1, k+w:Wimg]

( Try to derive the equation for b.

Set the partial derivation of eq. (1) to zero, and express b.

)

The tasks

- Go through the EM algorithm.

- Try to derive the equation for b (the background intensity) in the

M-step.

- Use the EM-algorithm to obtain the maximally likely estimate of the

villain's face F. In each iteration, display the actual estimate of F

along with the likelihood of the estimate. The input images can be

downloaded here from image_data.mat.

The datafile contains 500 images of 45x60 pixels. The face width w is

expected to be 36 pixels. Compute the estimate for

increasing m = 10,100,500.

-

In the first 10 images, mark the face position ki

which you have found, ki

= argmax ki

P( ki

| Xi).

Figure 4. The mostlikely face position

k1in the first

image.

Hint

In the E-step, it is reccommended first to sum up all the exponents of the

gaussian distributions, which occur in the products. Also, to avoid rounding

errors in the exp function, it is reccommended, before the division takes place,

to multiply both the numerator and the denumerator of the fraction by a large

constant (i.e. by adding a large number to the exponents).

References

[1]

Expectation-maximization algorithm (Wikipedia).

[2] Michail I. Schlesinger, Vaclav Hlavac. Ten Lectures on Statistical and Structural Pattern Recognition. Kluwer Academic Publishers, 2002.

Created

by Martin Urban, last update 18.7.2011