Dmytro Mishkin, Michal Perdoch, Jiri Matas, Karel Lenc

| MODS: | [pdf] |

|

[source] | [datasets] | [paper] |

| MODS-WxBS: | [pdf] |

|

[poster] | [source] | [datasets] |

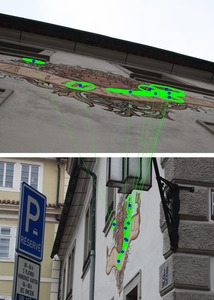

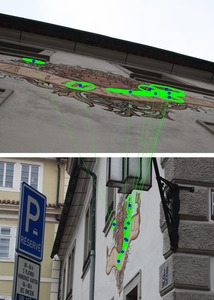

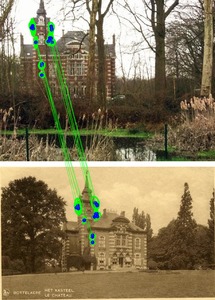

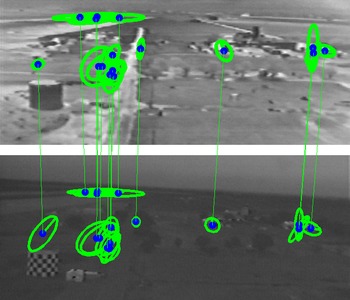

MODS (Matching On Demand with view Synthesis) is an algorithm for wide-baseline matching. It matches image pairs with extreme viewpoint changes that the previous state-of-the-art matcher, ASIFT, cannot match. MODS is fast on simple matching problems and robust on hard ones because it applies progressively more time-consuming feature detectors and generates synthesized views on demand, stopping as soon as a reliable estimate of the geometry is obtained.
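The on-demand idea can be illustrated with a minimal sketch. It is not the released C++ implementation: the function `match_on_demand`, the `min_inliers` threshold, the toy stages, and the choice to accumulate tentative matches across stages are all assumptions made for illustration. Each stage stands for one detector and view-synthesis setting, ordered from cheapest to most expensive, and the loop stops at the first stage whose verified geometry has enough support.

```python
from typing import Callable, List, Optional, Sequence, Tuple

# A tentative correspondence: (feature index in image 1, feature index in image 2).
# Hypothetical type, used only for this sketch.
Match = Tuple[int, int]


def match_on_demand(
    stages: Sequence[Callable[[], List[Match]]],
    verify: Callable[[List[Match]], List[Match]],
    min_inliers: int = 15,  # assumed stopping threshold, not taken from the paper
) -> Optional[Tuple[int, List[Match]]]:
    """Run stages from cheapest to most expensive until verification succeeds.

    Returns (number of stages used, inliers), or None if every stage fails.
    """
    tentative: List[Match] = []
    for step, run_stage in enumerate(stages, start=1):
        # Assumption: matches from earlier stages are kept and extended.
        tentative.extend(run_stage())
        inliers = verify(tentative)
        if len(inliers) >= min_inliers:
            return step, inliers  # reliable geometry found, stop early
    return None


# Toy stand-ins for real detectors and geometric verification.
def cheap_stage() -> List[Match]:
    return [(i, i) for i in range(5)]


def costly_stage() -> List[Match]:
    return [(i, i) for i in range(5, 30)]


def toy_verify(matches: List[Match]) -> List[Match]:
    # Pretend that a match is an inlier when both indices agree.
    return [m for m in matches if m[0] == m[1]]


easy = match_on_demand([lambda: [(i, i) for i in range(20)], costly_stage], toy_verify)
hard = match_on_demand([cheap_stage, costly_stage], toy_verify)
print(easy[0], hard[0])
```

In the toy run the easy pair stops after the first stage, while the hard pair needs the expensive stage as well, which is the behaviour the paragraph above describes.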

| Matcher/Dataset | VGG-Aff | EF | SymBench | MMS | GDB-ICP | UW | EVD | EZD | Lost in Past | WxBS |
|---|---|---|---|---|---|---|---|---|---|---|
| #Image pairs | 40 | 33 | 46 | 100 | 22 | 30 | 15 | 22 | 172 | 31 |
| MODS | 40 | 33 | 42 | 27 | 18 | 9 | 15 | 12 | 94 | 6 |
| MODS-WxBS | 40 | 33 | 43 | 82 | 22 | 9 | 15 | n/a | 107 | 12 |
| ASIFT | 40 | 23 | 27 | 18 | 15 | 6 | 5 | 8 | 62 | 1 |
| DualBootstrap | 36 | 31 | 38 | 79 | 16 | n/a | 0 | 4 | 16 | 0 |
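To compare matchers across datasets of different sizes, the counts can be normalized by the number of image pairs. The sketch below assumes that each table entry is the number of image pairs matched successfully (the page does not state this explicitly); the helper name `solved_fraction` is hypothetical, and `n/a` entries are kept as `None`.

```python
# Number of image pairs per dataset, copied from the table above.
pairs = {
    "VGG-Aff": 40, "EF": 33, "SymBench": 46, "MMS": 100, "GDB-ICP": 22,
    "UW": 30, "EVD": 15, "EZD": 22, "Lost in Past": 172, "WxBS": 31,
}

# Table rows in the same dataset order; None marks an "n/a" entry.
solved = {
    "MODS":          [40, 33, 42, 27, 18, 9, 15, 12, 94, 6],
    "MODS-WxBS":     [40, 33, 43, 82, 22, 9, 15, None, 107, 12],
    "ASIFT":         [40, 23, 27, 18, 15, 6, 5, 8, 62, 1],
    "DualBootstrap": [36, 31, 38, 79, 16, None, 0, 4, 16, 0],
}


def solved_fraction(matcher):
    """Return {dataset: fraction of pairs solved}, or None where the entry is n/a."""
    fractions = {}
    for dataset, count in zip(pairs, solved[matcher]):
        fractions[dataset] = None if count is None else count / pairs[dataset]
    return fractions


for matcher in solved:
    row = solved_fraction(matcher)
    cells = ", ".join(
        f"{name}: n/a" if value is None else f"{name}: {value:.0%}"
        for name, value in row.items()
    )
    print(f"{matcher}: {cells}")
```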

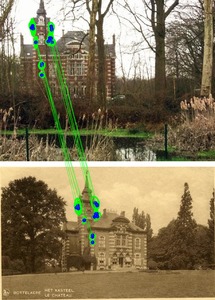

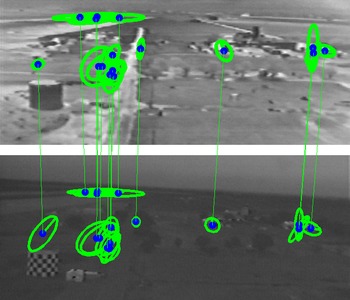

MODS-WxBS is an extension designed to also handle extreme appearance changes, such as visible-IR, day-night, and summer-winter matching, in addition to a wide geometric baseline.

As one application of MODS-WxBS, we built a place recognition engine for the VPRiCE Place Recognition challenge. It shows the best precision and F1 score reported so far and outperforms CNN-based competitors.

| [MODS] |

MODS: Fast and Robust Method for Two-View Matching. D. Mishkin and M. Perdoch and J. Matas. CVIU, 2015 [pdf]

@article{Mishkin2015MODS,

title = "MODS: Fast and robust method for two-view matching",

journal = "Computer Vision and Image Understanding",

year = "2015",

issn = "1077-3142",

doi = "10.1016/j.cviu.2015.08.005",

url = "http://www.sciencedirect.com/science/article/pii/S1077314215001800",

author = "Dmytro Mishkin and Jiri Matas and Michal Perdoch",

keywords = "Wide baseline stereo, Image matching, Local feature detectors, Local feature descriptors"

}

|

| [MODS-WxBS] |

WxBS: Wide Baseline Stereo Generalizations. D. Mishkin and M. Perdoch and J. Matas and K. Lenc. In Proc. of BMVC, 2015 [pdf]

@InProceedings{Mishkin2015WXBS,

author = {{Mishkin}, D. and {Matas}, J. and {Perdoch}, M. and {Lenc}, K. },

Booktitle = {Proceedings of the British Machine Vision Conference},

Publisher = {BMVA},

title = "{WxBS: Wide Baseline Stereo Generalizations}",

year = 2015,

month = sep,}

|

| [MODS-VPRiCE] |

Place Recognition with WxBS Retrieval. D. Mishkin and M. Perdoch and J. Matas. CVPR 2015 Workshop on Visual Place Recognition in Changing Environments [pdf]

@InProceedings{Mishkin2015VPRICE,

author = {{Mishkin}, D. and {Perdoch}, M. and {Matas}, J.},

title = "{Place Recognition with WxBS Retrieval}",

booktitle = {CVPR 2015 Workshop on Visual Place Recognition in Changing Environments},

year = 2015,

month = jun,

}

|

| |

Linux C++ source code, Windows C++ source code, Linux 64-bit binaries (old version). MODS and MODS-WxBS are implemented in a single package, which is configurable via ini files. |

| |

MODS-IVCNZ binaries (executable files, 64-bit Linux, Windows). The 2013 version, provided for reproducibility of results. |

| |

The Extreme View Dataset. EVD is a set of 15 image pairs with ground-truth homographies (the image pairs "adam", "graf" and "there" are from [1] and [2]). |

| |

Tentative correspondences on the Extreme View Dataset, for RANSAC benchmarking. |

| |

The Extreme Zoom Dataset. EZD consists of 6 image sets with increasing zoom factor, from a general view of the scene to a close-up of a single detail. |

| |

The Wide (multiple) Baseline Dataset v.1.1. (new!) 34 image pairs that simultaneously combine several nuisance factors: geometry, illumination, modality, etc. A cleaned-up and extended version of the WxBS dataset. |

| |

The Wide (multiple) Baseline Dataset. (old) 31 image pairs that simultaneously combine several nuisance factors: geometry, illumination, modality, etc. |

| |

The Wide Single Baseline Dataset. 40 image pairs, grouped by nuisance factor (geometry, illumination, appearance, modality), with local features pre-detected by Hessian-Affine, Edge FOCI and MSER. The same dataset, but with pre-extracted 65x65 local patches. W1BS benchmark code in Python. |

| |

The map2photo dataset: 6 image pairs, where one image is a satellite photo and the other is a map of the same area. |
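For datasets that ship ground-truth homographies, such as EVD, a common way to judge a correspondence is to map the point from the first image with the homography and measure its distance to the matched point in the second image. The sketch below is only an illustration under stated assumptions: the helper names, the 3-pixel threshold, the direction of the homography (image 1 to image 2), and the toy matrix are hypothetical, and the code does not read the dataset's actual file format.

```python
import math


def project(H, x, y):
    """Apply a 3x3 homography H (nested lists) to the point (x, y)."""
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return u / w, v / w


def transfer_error(H, p1, p2):
    """Distance in pixels between H applied to p1 and the matched point p2."""
    px, py = project(H, p1[0], p1[1])
    return math.hypot(px - p2[0], py - p2[1])


def count_correct(H, matches, max_error_px=3.0):
    """Count matches consistent with H; the 3 px threshold is an assumed value."""
    return sum(1 for p1, p2 in matches if transfer_error(H, p1, p2) <= max_error_px)


# Toy homography (scale by 2, then translate) and three toy correspondences.
H_toy = [[2.0, 0.0, 10.0],
         [0.0, 2.0, -5.0],
         [0.0, 0.0, 1.0]]
toy_matches = [
    ((1.0, 1.0), (12.0, -3.0)),    # consistent with H_toy
    ((0.0, 0.0), (10.0, -5.0)),    # consistent with H_toy
    ((3.0, 4.0), (100.0, 100.0)),  # gross outlier
]
print(count_correct(H_toy, toy_matches))
```

The same check can be used to label tentative correspondences as inliers or outliers when benchmarking a RANSAC variant.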

| [1] | K. Cordes and B. Rosenhahn and J. Ostermann. Increasing the Accuracy of Feature Evaluation Benchmarks Using Differential Evolution. In Proc. of SSCI, 2011. |

| [2] | G. Yu and J.-M. Morel. ASIFT: An Algorithm for Fully Affine Invariant Comparison. IPOL, 2011. http://dx.doi.org/10.5201/ipol.2011.my-asift |

| [3] | D. Mishkin and M. Perdoch and J. Matas. Two-View Matching with View Synthesis Revisited. In Proc. of IVCNZ, 2013, 436-441. [pdf] |