Speaker Notes

Press s to open the speaker notes. The slides alone are not intended to be self-contained.

Rolling Shutter Camera Synchronization with Sub-millisecond Accuracy

Matěj Šmíd, Jiří Matas

Center for Machine Perception

Czech Technical University in Prague

smidm@cmp.felk.cvut.cz

Multiple Cameras

- are common

- are the only solution for some applications

- require synchronization

Tracking Application

Ice Hockey Dataset

The Core Idea

synchronize using lighting changes affecting the whole scene

image overlap not required (!)

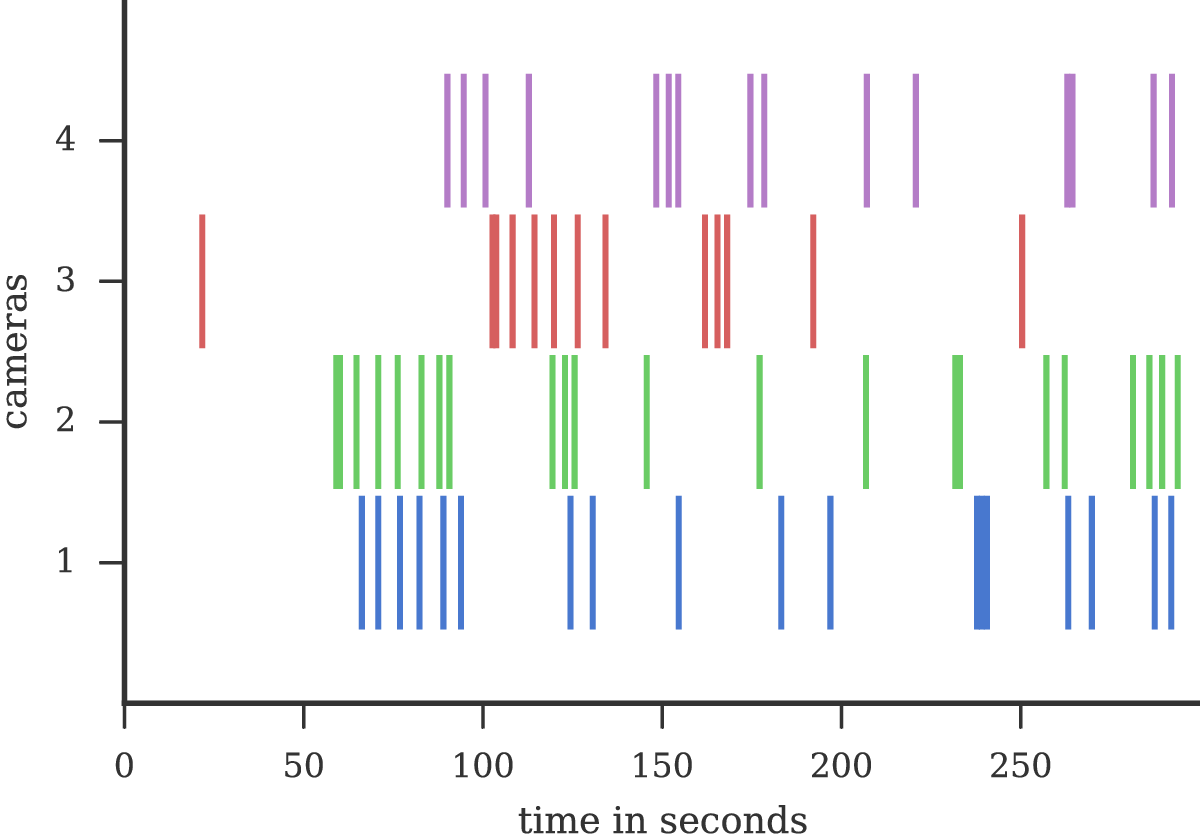

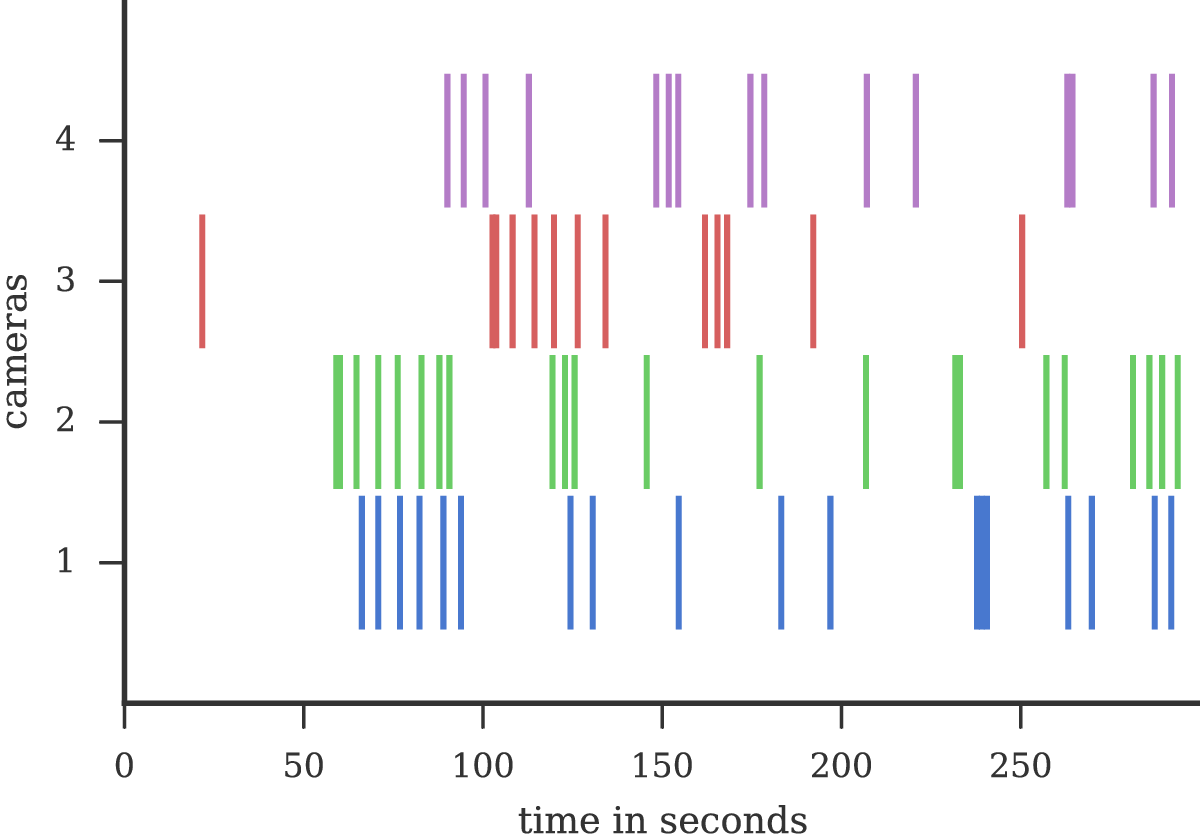

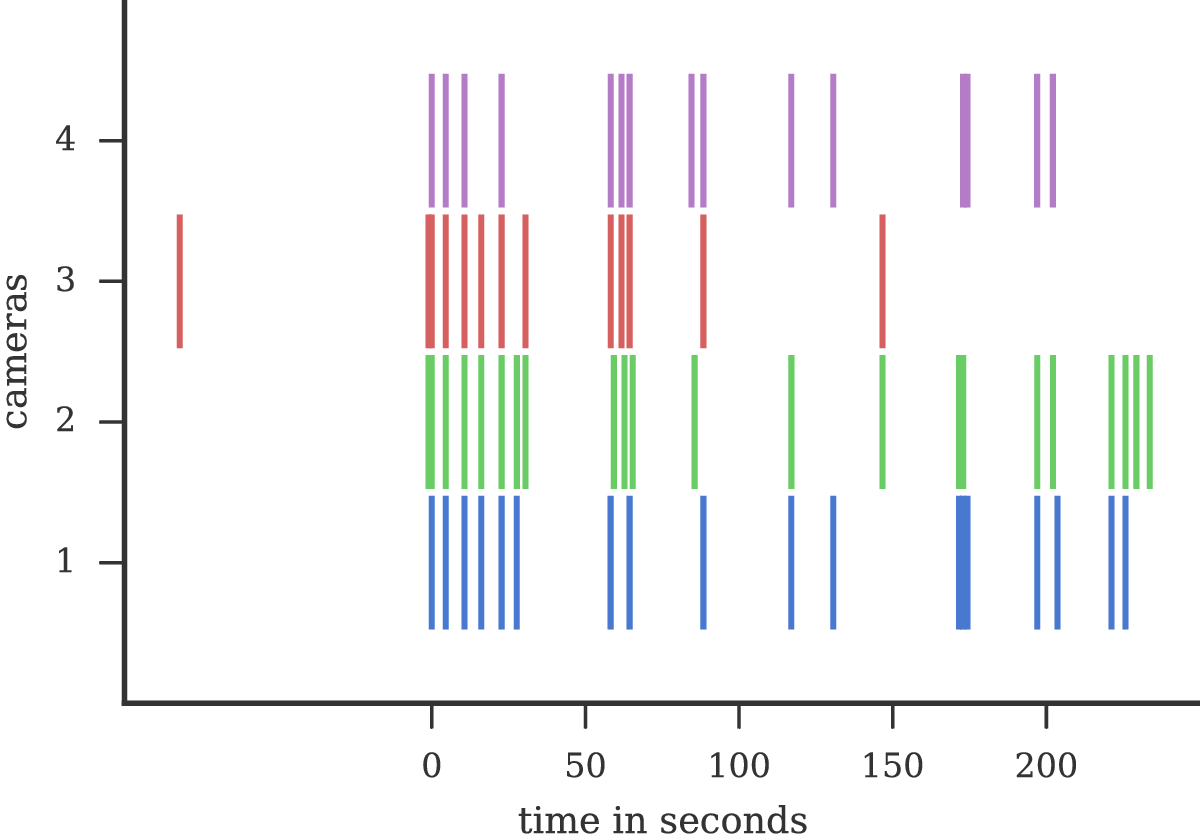

Detected Events

Synchronization by Mapping Frames

- choose a reference camera

- synchronize by shifting frame numbers by \(n\) whole frames

- assumption: same fps

- \(f_\mathrm{ref} = f + n\)

  - \(f_\mathrm{ref}\) reference camera frame number

  - \(f\) second camera frame number
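A minimal Python sketch of the frame-mapping model, assuming both cameras run at exactly the same fps and deliver every frame. The function name `to_reference_frame` and the offset value are hypothetical, chosen only for illustration.

```python
def to_reference_frame(f: int, n: int) -> int:
    """Map frame number f of the second camera to the reference camera
    using a constant whole-frame offset n: f_ref = f + n."""
    return f + n


# Hypothetical offset: the second camera started 12 frames after the reference.
n = 12
print([to_reference_frame(f, n) for f in range(3)])  # prints [12, 13, 14]
```

The model has a single integer parameter, so it cannot represent offsets smaller than one frame, and it silently assumes no frame is ever lost.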

Doesn't work

Frame Drops

- common phenomenon

- often ignored

- causes: high system load, lost packets

- synchronization error accumulates
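To see why the error accumulates, here is a small sketch that compares each delivered frame's true capture time with the time implied by its index. The 25 fps rate and the drop positions are hypothetical, not data from the talk; every drop shifts all later indices by one frame period.

```python
PERIOD = 0.04  # hypothetical 25 fps camera: frame period in seconds


def index_timing_errors(dropped, n_captured):
    """Timing error (s) of each delivered frame when its capture time is
    assumed to be index * PERIOD although the captured frames listed in
    `dropped` never reached the recording."""
    errors = []
    delivered_index = 0
    for captured_index in range(n_captured):
        if captured_index in dropped:
            continue  # lost frame: every later index slips by one
        errors.append((captured_index - delivered_index) * PERIOD)
        delivered_index += 1
    return errors


# Two drops among ten captured frames: the error grows with each drop.
print(index_timing_errors(dropped={3, 7}, n_captured=10))
# prints [0.0, 0.0, 0.0, 0.04, 0.04, 0.04, 0.08, 0.08]
```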

Video Timing

```console
$ ffprobe -select_streams v -show_entries frame=best_effort_timestamp_time -of csv video.mp4
frame,0.000000
frame,0.040000
frame,0.080000
frame,0.120000
frame,0.160000
frame,0.200000
frame,0.240000
frame,0.280000
frame,0.320000
frame,0.360000
frame,0.400000
frame,0.440000
```

- use frame timestamps instead of indices

- available in:

  - video containers

  - streaming protocols

- why are they ignored?
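A sketch of reading the timestamps from ffprobe's CSV output (the `frame,<time>` format shown on the slide) and spotting frame drops as gaps noticeably longer than the nominal frame period. The function names and the 1.5-period gap threshold are illustrative assumptions, not part of the authors' tool chain.

```python
def parse_ffprobe_csv(text):
    """Frame timestamps (s) from `frame,<time>` lines of ffprobe CSV output."""
    times = []
    for line in text.splitlines():
        section, _, value = line.strip().partition(",")
        if section != "frame":
            continue
        try:
            times.append(float(value))
        except ValueError:
            pass  # e.g. "N/A" for frames without a timestamp
    return times


def find_frame_drops(times, period):
    """(index, number of missing frames) for every gap > 1.5 frame periods."""
    drops = []
    for i in range(1, len(times)):
        gap = times[i] - times[i - 1]
        if gap > 1.5 * period:
            drops.append((i, round(gap / period) - 1))
    return drops


# Hypothetical output in the slide's format, with the frame at 0.12 s missing.
sample = """frame,0.000000
frame,0.040000
frame,0.080000
frame,0.160000
frame,0.200000"""
times = parse_ffprobe_csv(sample)
print(find_frame_drops(times, period=0.04))  # prints [(3, 1)]
```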

Timestamp-based Sync

- independent of fps, robust to frame drops

- \(\color{red}{t}_\mathrm{ref} = \color{red}{t} + \Delta \color{red}{t}\)

  - \(t\) frame timestamp

  - \(\Delta t\) time shift
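With timestamps, mapping a frame to the reference camera is a single addition, and finding the matching reference frame becomes a nearest-neighbour lookup that is unaffected by dropped frames. A minimal sketch, assuming \(\Delta t\) is already known; the function names and the example timestamps are hypothetical.

```python
from bisect import bisect_left


def to_reference_time(t, delta_t):
    """Timestamp-based model: t_ref = t + delta_t."""
    return t + delta_t


def nearest_reference_frame(t, delta_t, ref_timestamps):
    """Index of the reference frame whose timestamp is closest to t + delta_t.
    ref_timestamps must be sorted and non-empty."""
    t_ref = to_reference_time(t, delta_t)
    i = bisect_left(ref_timestamps, t_ref)
    candidates = [j for j in (i - 1, i) if 0 <= j < len(ref_timestamps)]
    return min(candidates, key=lambda j: abs(ref_timestamps[j] - t_ref))


# Hypothetical reference timestamps with the frame at 0.12 s dropped.
ref = [0.00, 0.04, 0.08, 0.16, 0.20]
print(nearest_reference_frame(0.10, 0.065, ref))  # prints 3 (the 0.16 s frame)
```

Because the lookup works on times rather than indices, the missing 0.12 s frame does not shift the result.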

Rolling Shutter

- frame rows not exposed simultaneously

- small delay between consecutive rows

- disadvantage: image distortion

- advantage: the rows act as a high-speed optical line sensor

Distortion

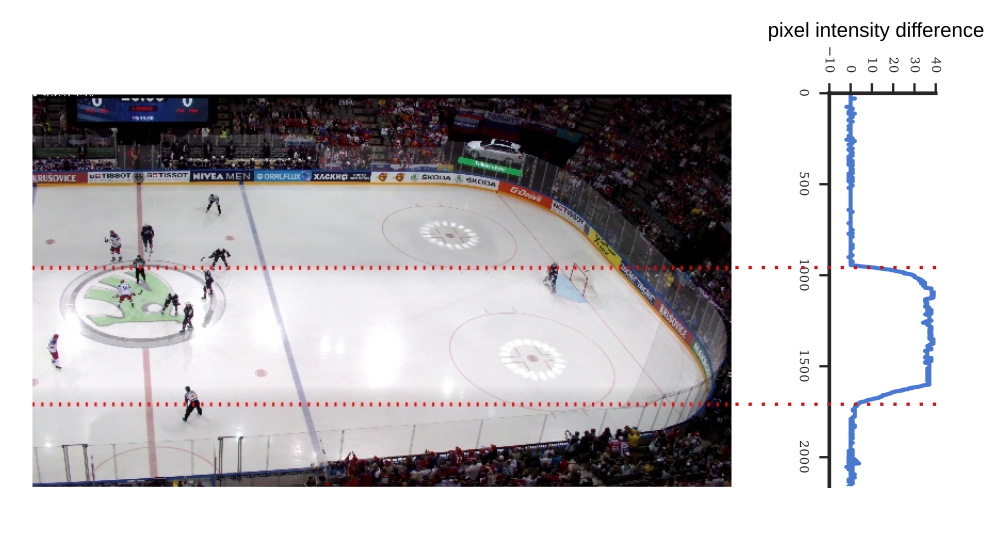

Lighting Change

- e.g. flash, room light switch

- profile: line-wise median, difference between consecutive images
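A sketch of locating a lighting change within a frame with NumPy. It assumes the profile is the line-wise (row-wise) median of each frame, that rows are read out top to bottom, and that the event row is the first row whose profile changes by more than a threshold between consecutive frames; the threshold value and function names are hypothetical.

```python
import numpy as np


def row_profile(frame):
    """Line-wise (row-wise) median intensity: one value per image row."""
    return np.median(frame.astype(float), axis=1)


def lighting_change_row(prev_frame, frame, threshold=20.0):
    """First row whose median brightness changed by more than `threshold`
    between two consecutive frames, or None if no row did."""
    diff = np.abs(row_profile(frame) - row_profile(prev_frame))
    rows = np.flatnonzero(diff > threshold)
    return int(rows[0]) if rows.size else None


# Synthetic 480x640 frames: a light switches on while row 300 is being read
# out, so only rows 300 and below of the second frame are brighter.
prev_frame = np.full((480, 640), 50, dtype=np.uint8)
frame = prev_frame.copy()
frame[300:, :] = 200
print(lighting_change_row(prev_frame, frame))  # prints 300
```

The median makes the profile robust to moving objects that cover only part of a row.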

Going Sub-frame

- the capture time of a row in an arbitrary camera can be mapped to the time of a row in the reference camera

- \(t'_\mathrm{ref} = t + \color{red}{r T_\mathrm{row}} + \Delta t\)

  - \(r\) row number

  - \(T_\mathrm{row}\) time per row
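The sub-frame model adds the readout delay of row \(r\) to the frame timestamp. A minimal sketch of the formula; the numeric values (20 µs per row, 0.5 s offset) are hypothetical.

```python
def row_time_in_reference(t, r, T_row, delta_t):
    """Time of row r of a frame with timestamp t, expressed in reference
    camera time: t'_ref = t + r * T_row + delta_t."""
    return t + r * T_row + delta_t


# Hypothetical values: 20 us per row, 0.5 s offset between the cameras.
print(row_time_in_reference(t=1.0, r=100, T_row=20e-6, delta_t=0.5))
```

Row 100 is thus 2 ms later than row 0 of the same frame, a difference that whole-frame timestamps cannot express.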

Doesn't work precisely

Clock Skew

- at millisecond scale, inaccuracy of the image sensor clock generators becomes significant

- compensation needed (\(\beta\))

- \(t''_\mathrm{ref} = \color{red}{\beta} t + r T_\mathrm{row} + \Delta t\)

  - \(\beta\) relative clock skew to the reference camera
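A back-of-the-envelope sketch of why the skew term matters, together with the full mapping from the slide. The 20 ppm skew is a hypothetical value, not a measurement from the talk; the function names are also hypothetical.

```python
def to_reference_time(t, r, beta, T_row, delta_t):
    """Full model from the slide: t''_ref = beta * t + r * T_row + delta_t."""
    return beta * t + r * T_row + delta_t


def skew_drift(beta, elapsed):
    """Error (s) accumulated after `elapsed` seconds if the skew is ignored,
    i.e. the difference between beta * t and t at t = elapsed."""
    return (beta - 1.0) * elapsed


# Hypothetical 20 ppm relative skew over a 5-minute (300 s) recording.
print(f"{skew_drift(1 + 20e-6, 300.0) * 1e3:.1f} ms")  # prints 6.0 ms
```

Even a small skew thus grows well past the millisecond target over a few minutes, which a constant \(\Delta t\) cannot absorb.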

Sync Parameters

- lighting event: \((t, r)\), a pair of frame time and row

- pair of corresponding events in 2 cameras = 1 equation

- system of linear equations

- least squares solution

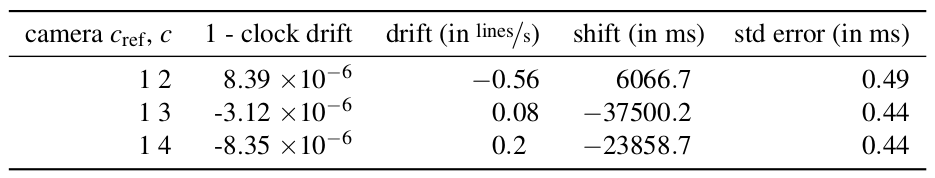
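A minimal least-squares sketch for one camera against the reference, assuming the reference camera's time per row \(T_\mathrm{row,ref}\) is known (a simplification made here). Each matched event contributes one row of the linear system \(\beta t + r T_\mathrm{row} + \Delta t = t_\mathrm{ref} + r_\mathrm{ref} T_\mathrm{row,ref}\). The function name and the synthetic data are hypothetical.

```python
import numpy as np


def estimate_sync_params(t, r, t_ref, r_ref, T_row_ref):
    """Least-squares estimate of (beta, T_row, delta_t) for one camera.

    Each matched lighting event gives one linear equation
        beta * t + r * T_row + delta_t = t_ref + r_ref * T_row_ref,
    i.e. the event time in this camera mapped to reference camera time.
    """
    t, r = np.asarray(t, float), np.asarray(r, float)
    rhs = np.asarray(t_ref, float) + np.asarray(r_ref, float) * T_row_ref
    A = np.column_stack([t, r, np.ones_like(t)])
    params, *_ = np.linalg.lstsq(A, rhs, rcond=None)
    return params  # [beta, T_row, delta_t]


# Synthetic events generated from known (hypothetical) parameters.
beta, T_row, delta_t, T_row_ref = 1 + 20e-6, 20e-6, 0.37, 30e-6
t = np.array([1.00, 5.04, 9.12, 20.00, 33.36])
r = np.array([100, 500, 900, 50, 700])
r_ref = np.array([640, 10, 333, 900, 420])
t_ref = beta * t + r * T_row + delta_t - r_ref * T_row_ref
print(estimate_sync_params(t, r, t_ref, r_ref, T_row_ref))
```

With at least three events at different times and rows the system is overdetermined or exactly determined; extra events average out detection noise.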

Results


- residual errors < 1 ms

- ice hockey dataset, 5 minutes, 4 cameras

Open Source

```python
import flashvideosynchronization

# filenames: video files, one per camera (not defined on this slide)
sync = flashvideosynchronization.FlashVideoSynchronization()
sync.detect_flash_events(filenames)

cameras = [1, 2, 3, 4]
# index of one corresponding flash event in each camera's detected events
matching_events = {1: 3, 3: 2, 2: 8, 4: 2}
offsets = {cam: sync.events[cam][matching_events[cam]]['time']
           for cam in cameras}

# synchronize cameras: find parameters of transformations
# that map camera time to reference camera time
sync.synchronize(cameras, offsets, base_cam=1)

# get sub-frame synchronized time for camera 1, frame 10 and row 100
# (timestamps: per-camera frame timestamps, not defined on this slide)
print(sync.get_time(cam=1, frame_time=timestamps[1][10], row=100))
```

https://github.com/smidm/flashvideosynchronization

Summary

- sub-millisecond accuracy for rolling shutter cameras

- requirement: detectable global lighting changes

- open source

http://cmp.felk.cvut.cz/~smidm/flash_synchronization