Notes on usage of gsmo:

- Function is the part of STPR toolbox, but because it must be compiled from gsmo_mex.c into matlab binary module (MEX), which may differ for different systems. Compiled module can be downloaded form here. Attention: In MATLAB this function should be called as gsmo (help is available by typing `help gsmo'), not gsmo_mex.

- It is usefull to set `options.verb' (see help), so that you see the progress of optimization.

- It is also usefull to bound the maximal number of iterations (parameter `options.tmax'). The reason is, that when primal QP task is not feasible (there is no parameters satisfying constraints, the dual problem is not bounded (see [2]) and the algorithm will infinitely diverge.

- You should check output parameter `stat' to detect if the optimization finished successfully. If it was not successful, we should figure out why.

ppatterns(data); pline(model); hold on; plot(X(1,I),X(2,I),'ks','MarkerSize',12);for training samples data.X, labels data.y and classifier given by model.W, model.b and support vector indices I.

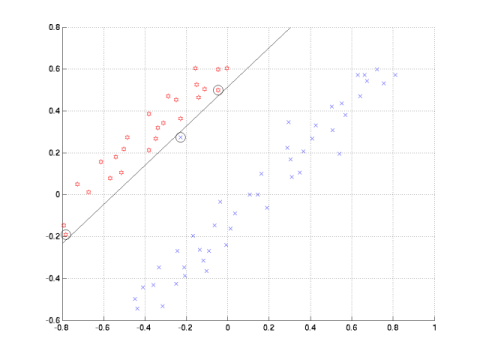

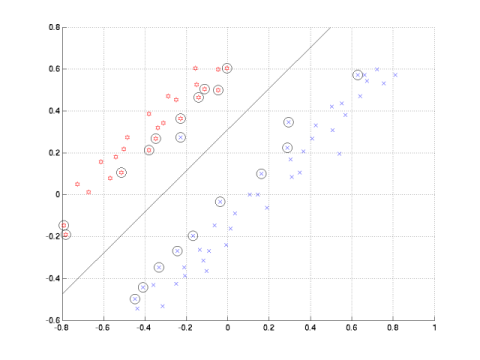

| C = Inf | C = 1 |

|

|

|

For your control, the numerical results on data_33rpz_cv08-separable:

- model.W = [-24.3726; 23.9039]; model.b = -22.1428; % C=inf

- model.W = [ -2.3024; 2.1573]; model.b = -1.6498; % C=1

x = (sum of pixel values in

the left half of image) - (sum of

pixel values in the right half of

image)

y = (sum of pixel values in the upper half of image) - (sum of pixel values in the lower half of image)

Normalize the features to interval <-1,1> before learning SVM.